Abadie, Alberto, and Javier Gardeazabal. 2003. “The Economic Costs of Conflict: A Case Study of the Basque Country.” American Economic Review 93 (1): 113–32.

Albert, Christopher G, and Katharina Rath. 2020. “Gaussian Process Regression for Data Fulfilling Linear Differential Equations with Localized Sources.” Entropy 22 (2): 152.

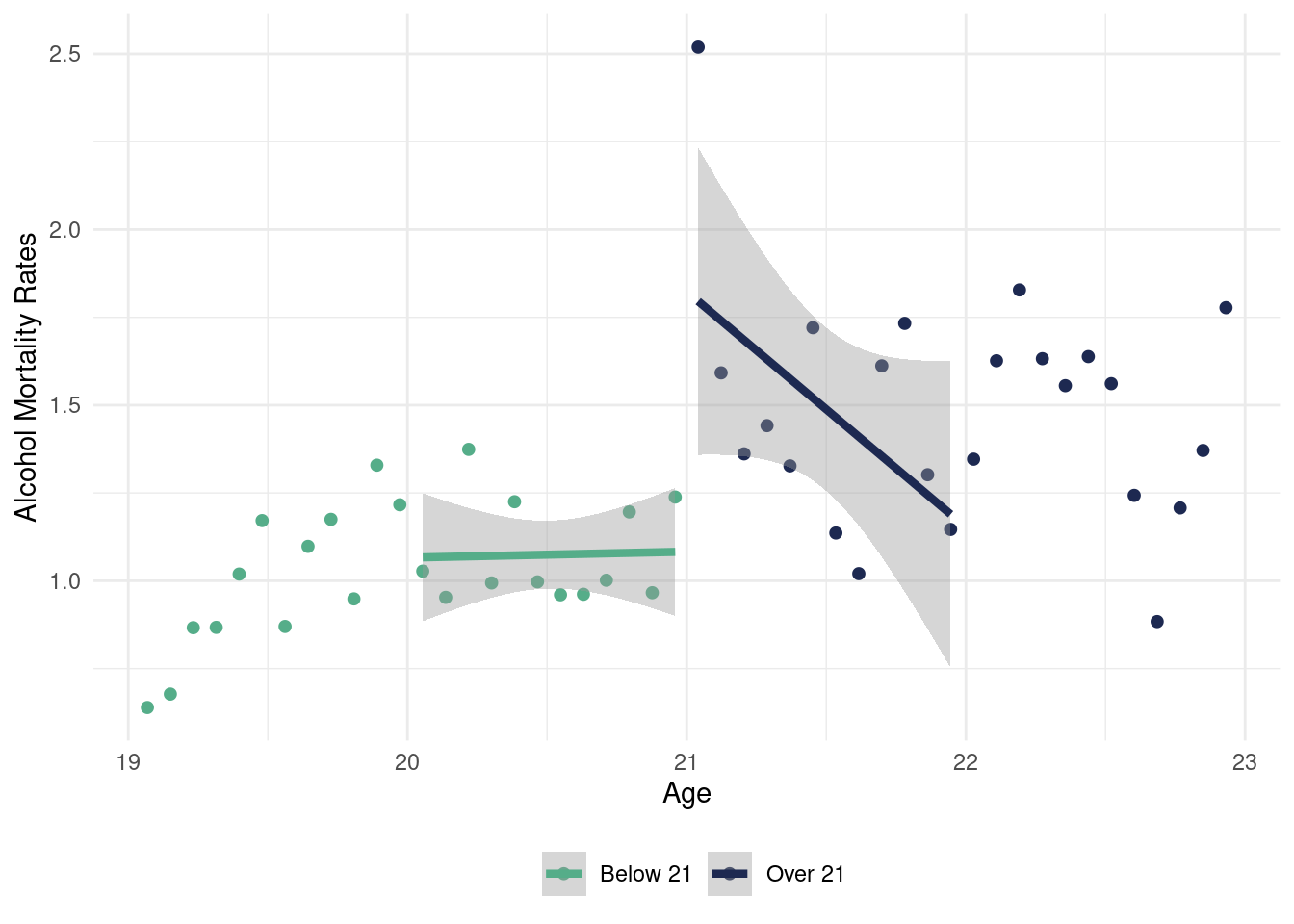

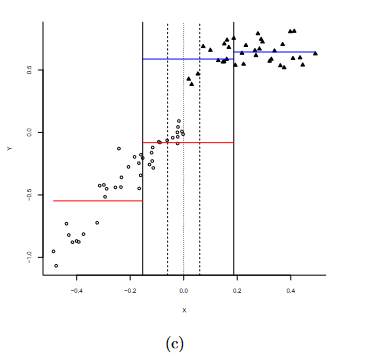

Alcantara, Rafael, P Richard Hahn, Carlos Carvalho, and Hedibert Lopes. 2025. “Learning Conditional Average Treatment Effects in Regression Discontinuity Designs Using Bayesian Additive Regression Trees.” arXiv Preprint arXiv:2503.00326.

Alcantara, Rafael, Meijia Wang, P Richard Hahn, and Hedibert Lopes. 2024. “Modified BART for Learning Heterogeneous Effects in Regression Discontinuity Designs.” arXiv Preprint arXiv:2407.14365.

Ben-Michael, Eli, David Arbour, Avi Feller, Alexander Franks, and Steven Raphael. 2023. “Estimating the Effects of a California Gun Control Program with Multitask Gaussian Processes.” The Annals of Applied Statistics 17 (2): 985–1016.

Besginow, Andreas, and Markus Lange-Hegermann. 2022. “Constraining Gaussian Processes to Systems of Linear Ordinary Differential Equations.” Advances in Neural Information Processing Systems 35: 29386–99.

Binois, Mickaël, and Robert B Gramacy. 2021. “hetGP: Heteroskedastic Gaussian Process Modeling and Sequential Design in R.”

Card, D., and A. B. Krueger. 1994. “Minimum Wages and Employment: A Case Study of the Fast-Food Industry in New Jersey and Pennsylvania.” American Economic Review 84 (4): 772–93. https://www.jstor.org/stable/2118030.

Chen, Ricky TQ, Yulia Rubanova, Jesse Bettencourt, and David K Duvenaud. 2018. “Neural Ordinary Differential Equations.” Advances in Neural Information Processing Systems 31.

Chipman, Hugh A, Edward I George, Robert E McCulloch, and Thomas S Shively. 2022. “mBART: Multidimensional Monotone BART.” Bayesian Analysis 17 (2): 515–44.

Chipman, Hugh, Edward George, Richard Hahn, Robert McCulloch, Matthew Pratola, and Rodney Sparapani. 2014. “Bayesian Additive Regression Trees, Computational Approaches.” Wiley StatsRef: Statistics Reference Online, 1–23.

Cox, David R. 1972. “Regression Models and Life-Tables.” Journal of the Royal Statistical Society: Series B (Methodological) 34 (2): 187–202.

Dahl, Benjamin K, Matthew J Heaton, Richard L Warr, Jared D Fisher, and Grant G Schultz. 2024. “Modeling Crash Risk on Roadway Networks Using Bayesian Regression Trees.” Technometrics, no. just-accepted: 1–17.

Deshpande, Sameer K. 2024. “flexBART: Flexible Bayesian Regression Trees with Categorical Predictors.” Journal of Computational and Graphical Statistics, no. just-accepted: 1–18.

Deshpande, Sameer K, Ray Bai, Cecilia Balocchi, Jennifer E Starling, and Jordan Weiss. 2020. “VCBART: Bayesian Trees for Varying Coefficients.” arXiv Preprint arXiv:2003.06416.

Gaffney, Jim A, Lin Yang, and Suzanne Ali. 2022. “Constraining Model Uncertainty in Plasma Equation-of-State Models with a Physics-Constrained Gaussian Process.” arXiv Preprint arXiv:2207.00668.

Gramacy, Robert B, and Herbert K H Lee. 2008. “Bayesian Treed Gaussian Process Models with an Application to Computer Modeling.” Journal of the American Statistical Association 103 (483): 1119–30.

Hahn, P Richard, Indranil Goswami, and Carl F Mela. 2015. “A Bayesian Hierarchical Model for Inferring Player Strategy Types in a Number Guessing Game.”

Hahn, P Richard, and Andrew Herren. 2022. “Feature Selection in Stratification Estimators of Causal Effects: Lessons from Potential Outcomes, Causal Diagrams, and Structural Equations.” arXiv Preprint arXiv:2209.11400.

Hahn, P Richard, Jared S Murray, and Carlos M Carvalho. 2020. “Bayesian Regression Tree Models for Causal Inference: Regularization, Confounding, and Heterogeneous Effects (with Discussion).” Bayesian Analysis 15 (3): 965–1056.

Hahn, P. R., D. Puelz, J. He, and C. M. Carvalho. 2016. “Regularization and Confounding in Linear Regression for Treatment Effect Estimation.” Bayesian Analysis. https://doi.org/10.1214/16-BA1044.

Han, Lifeng, Changhan He, Huy Dinh, John Fricks, and Yang Kuang. 2022. “Learning Biological Dynamics from Spatio-Temporal Data by Gaussian Processes.” Bulletin of Mathematical Biology 84 (7): 69.

He, Jingyu, and P Richard Hahn. 2023. “Stochastic Tree Ensembles for Regularized Nonlinear Regression.” Journal of the American Statistical Association 118 (541): 551–70.

Herren, Andrew, and P Richard Hahn. 2020. “Semi-Supervised Learning and the Question of True Versus Estimated Propensity Scores.” arXiv Preprint arXiv:2009.06183.

Hill, Jennifer L. 2011. “Bayesian Nonparametric Modeling for Causal Inference.” Journal of Computational and Graphical Statistics 20 (1): 217–40.

Jidling, Carl, Niklas Wahlström, Adrian Wills, and Thomas B Schön. 2017. “Linearly Constrained Gaussian Processes.” Advances in Neural Information Processing Systems 30.

Kalbfleisch, JD, and RL Prentice. 1980. “Failure Time Models.” In The Statistical Analysis of Failure Time Data, 21–38. John Wiley, New York.

Kaplan, Edward L, and Paul Meier. 1958. “Nonparametric Estimation from Incomplete Observations.” Journal of the American Statistical Association 53 (282): 457–81.

Krantsevich, Chelsea, P Richard Hahn, Yi Zheng, and Charles Katz. 2023. “Bayesian Decision Theory for Tree-Based Adaptive Screening Tests with an Application to Youth Delinquency.” The Annals of Applied Statistics 17 (2): 1038–63.

Krantsevich, Nikolay. 2023. “Tree Ensemble Algorithms for Causal Machine Learning.” PhD thesis, Arizona State University.

Krantsevich, Nikolay, Jingyu He, and P Richard Hahn. 2023. “Stochastic Tree Ensembles for Estimating Heterogeneous Effects.” In International Conference on Artificial Intelligence and Statistics, 6120–31. PMLR.

Lange-Hegermann, Markus. 2018. “Algorithmic Linearly Constrained Gaussian Processes.” Advances in Neural Information Processing Systems 31.

Linero, Antonio R. 2022. “SoftBart: Soft Bayesian Additive Regression Trees.” arXiv Preprint arXiv:2210.16375.

Linero, Antonio R, and Yun Yang. 2018. “Bayesian Regression Tree Ensembles That Adapt to Smoothness and Sparsity.” Journal of the Royal Statistical Society Series B: Statistical Methodology 80 (5): 1087–1110.

Lu, Xuetao, and Robert E McCulloch. 2023. “Gaussian Processes Correlated Bayesian Additive Regression Trees.” arXiv Preprint arXiv:2311.18699.

Maia, Mateus, Keefe Murphy, and Andrew C Parnell. 2024. “GP-BART: A Novel Bayesian Additive Regression Trees Approach Using Gaussian Processes.” Computational Statistics & Data Analysis 190: 107858.

McCartan, Cory, and Kosuke Imai. 2023. “Sequential Monte Carlo for Sampling Balanced and Compact Redistricting Plans.” The Annals of Applied Statistics 17 (4): 3300–3323.

McCulloch, Robert E, Rodney A Sparapani, Brent R Logan, and Purushottam W Laud. 2021. “Causal Inference with the Instrumental Variable Approach and Bayesian Nonparametric Machine Learning.” arXiv Preprint arXiv:2102.01199.

Mohan, Arvind, Ashesh Chattopadhyay, and Jonah Miller. 2024. “What You See Is Not What You Get: Neural Partial Differential Equations and the Illusion of Learning.” arXiv Preprint arXiv:2411.15101.

Murray, Jared S. 2021. “Log-Linear Bayesian Additive Regression Trees for Multinomial Logistic and Count Regression Models.” Journal of the American Statistical Association 116 (534): 756–69.

Orlandi, Vittorio, Jared Murray, Antonio Linero, and Alexander Volfovsky. 2021. “Density Regression with Bayesian Additive Regression Trees.” arXiv Preprint arXiv:2112.12259.

Papakostas, Demetrios, P Richard Hahn, Jared Murray, Frank Zhou, and Joseph Gerakos. 2023. “Do Forecasts of Bankruptcy Cause Bankruptcy? A Machine Learning Sensitivity Analysis.” The Annals of Applied Statistics 17 (1): 711–39.

Pratola, Matthew T, Hugh A Chipman, Edward I George, and Robert E McCulloch. 2020. “Heteroscedastic BART via Multiplicative Regression Trees.” Journal of Computational and Graphical Statistics 29 (2): 405–17.

Quiroga, Miriana, Pablo G Garay, Juan M Alonso, Juan Martin Loyola, and Osvaldo A Martin. 2022. “Bayesian Additive Regression Trees for Probabilistic Programming.” arXiv Preprint arXiv:2206.03619.

Raissi, Maziar, and George Em Karniadakis. 2018. “Hidden Physics Models: Machine Learning of Nonlinear Partial Differential Equations.” Journal of Computational Physics 357: 125–41.

Raissi, Maziar, Paris Perdikaris, and George Em Karniadakis. 2017. “Machine Learning of Linear Differential Equations Using Gaussian Processes.” Journal of Computational Physics 348: 683–93.

———. 2018. “Numerical Gaussian Processes for Time-Dependent and Nonlinear Partial Differential Equations.” SIAM Journal on Scientific Computing 40 (1): 172–98.

Ressel, S, JJ Ruby, GW Collins, and JR Rygg. 2022. “Density Reconstruction in Convergent High-Energy-Density Systems Using X-Ray Radiography and Bayesian Inference.” Physics of Plasmas 29 (7).

Rosenbaum, P. R., and D. B. Rubin. 1983. “The Central Role of the Propensity Score in Observational Studies for Causal Effects.” Biometrika 70: 41–55.

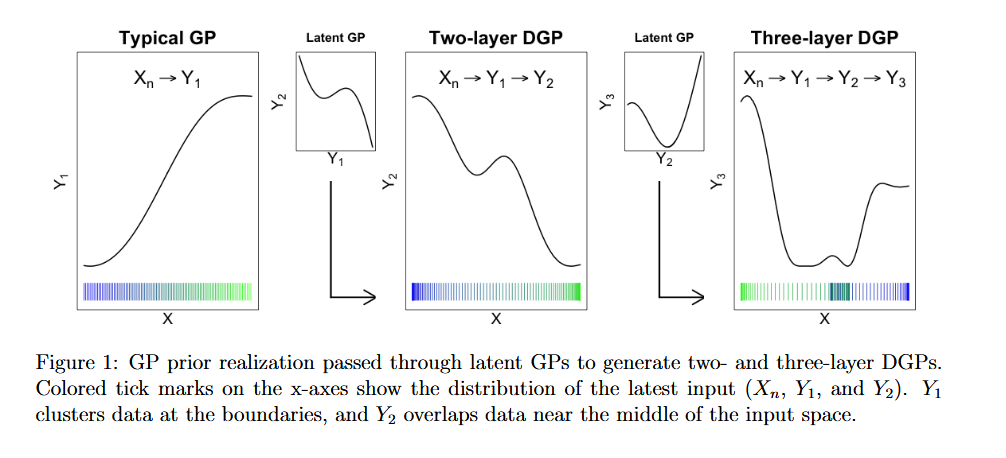

Sauer, Annie, Robert B Gramacy, and David Higdon. 2023. “Active Learning for Deep Gaussian Process Surrogates.” Technometrics 65 (1): 4–18.

Senn, Stephen, Erika Graf, and Angelika Caputo. 2007. “Stratification for the Propensity Score Compared with Linear Regression Techniques to Assess the Effect of Treatment or Exposure.” Statistics in Medicine 26 (30): 5529–44.

Shalizi, Cosma Rohilla, and Andrew C Thomas. 2011. “Homophily and Contagion Are Generically Confounded in Observational Social Network Studies.” Sociological Methods & Research 40 (2): 211–39.

Solak, Ercan, Roderick Murray-Smith, WE Leithead, Douglas Leith, and Carl Rasmussen. 2002. “Derivative Observations in Gaussian Process Models of Dynamic Systems.” Advances in Neural Information Processing Systems 15.

Sparapani, Rodney A, Brent R Logan, Martin J Maiers, Purushottam W Laud, and Robert E McCulloch. 2023. “Nonparametric Failure Time: Time-to-Event Machine Learning with Heteroskedastic Bayesian Additive Regression Trees and Low Information Omnibus Dirichlet Process Mixtures.” Biometrics 79 (4): 3023–37.

Starling, Jennifer E, Jared S Murray, Carlos M Carvalho, Radek K Bukowski, and James G Scott. 2020. “BART with Targeted Smoothing: An Analysis of Patient-Specific Stillbirth Risk.”

Swiler, Laura P, Mamikon Gulian, Ari L Frankel, Cosmin Safta, and John D Jakeman. 2020. “A Survey of Constrained Gaussian Process Regression: Approaches and Implementation Challenges.” Journal of Machine Learning for Modeling and Computing 1 (2).

Tan, Yaoyuan Vincent, and Jason Roy. 2019. “Bayesian Additive Regression Trees and the General BART Model.” Statistics in Medicine 38 (25): 5048–69.

Thal, Dan RC, and Mariel M Finucane. 2023. “Causal Methods Madness: Lessons Learned from the 2022 ACIC Competition to Estimate Health Policy Impacts.” Observational Studies 9 (3): 3–27.

Wahba, Grace. 1973. “A Class of Approximate Solutions to Linear Operator Equations.” Journal of Approximation Theory 9 (1): 61–77.

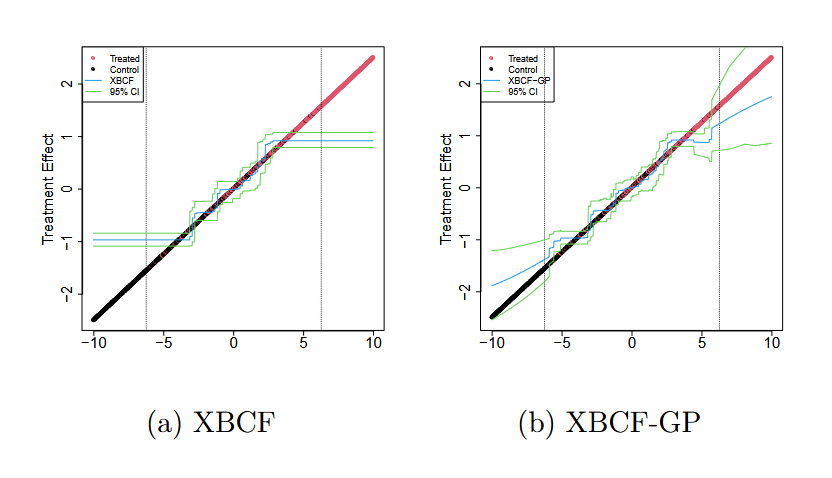

Wang, Meijia, Jingyu He, and P Richard Hahn. 2024. “Local Gaussian Process Extrapolation for BART Models with Applications to Causal Inference.” Journal of Computational and Graphical Statistics 33 (2): 724–35.

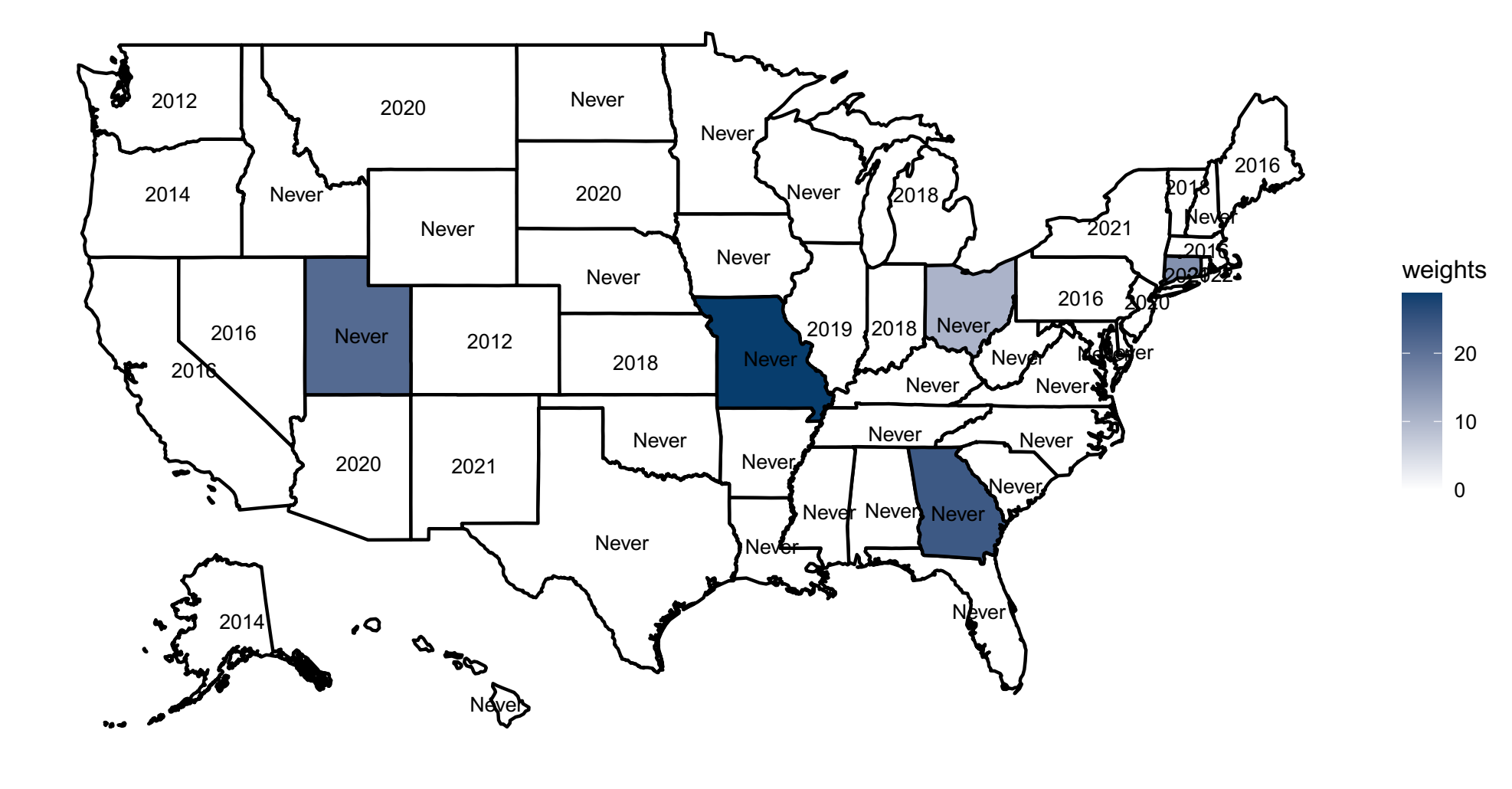

Wang, Meijia, Ignacio Martinez, and P Richard Hahn. 2024. “LongBet: Heterogeneous Treatment Effect Estimation in Panel Data.” arXiv Preprint arXiv:2406.02530.

Williams, Christopher KI, and Carl Edward Rasmussen. 2006. Gaussian Processes for Machine Learning. MIT Press, Cambridge, MA.

Yang, Xiu, David Barajas-Solano, Guzel Tartakovsky, and Alexandre M Tartakovsky. 2019. “Physics-Informed CoKriging: A Gaussian-Process-Regression-Based Multifidelity Method for Data-Model Convergence.” Journal of Computational Physics 395: 410–31.

Yang, Xiu, Guzel Tartakovsky, and Alexandre Tartakovsky. 2018. “Physics-Informed Kriging: A Physics-Informed Gaussian Process Regression Method for Data-Model Convergence.” arXiv Preprint arXiv:1809.03461.

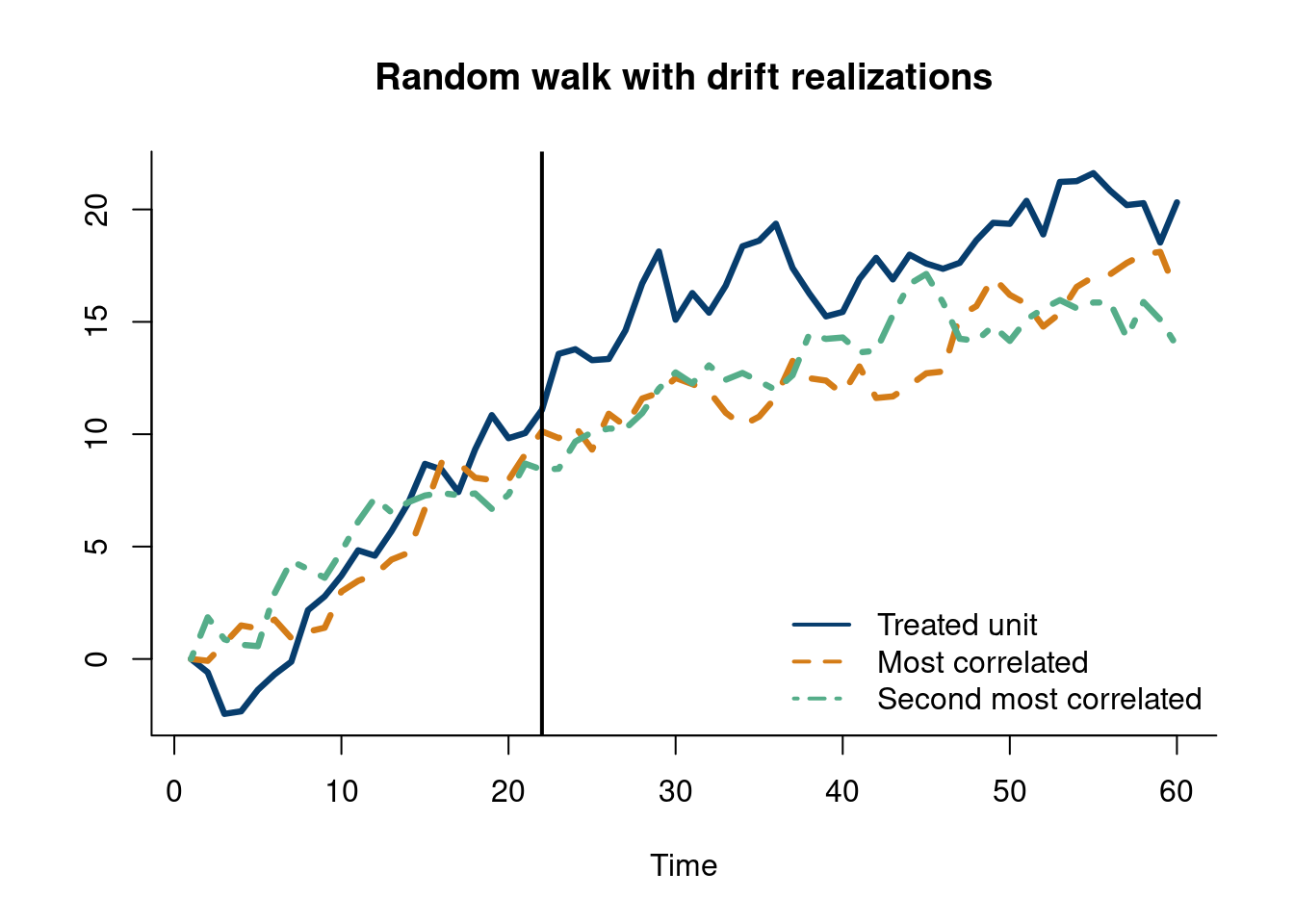

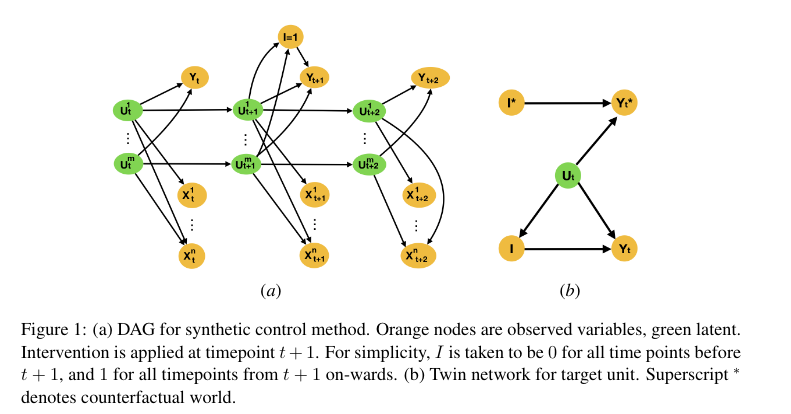

Zeitler, Jakob, Athanasios Vlontzos, and Ciarán Mark Gilligan-Lee. 2023. “Non-Parametric Identifiability and Sensitivity Analysis of Synthetic Control Models.” In Conference on Causal Learning and Reasoning, 850–65. PMLR.